Success Story

Building an AI-Assisted Research Platform for a Switzerland-Based Clinical Research Firm

About the client

The client is a Switzerland-based life sciences company supporting pharmaceutical, biotech, and medtech organizations. The firm produces structured research updates and periodic newsletters by systematically aggregating and validating scientific literature from leading global databases. Its team reviews peer-reviewed publications, preprints, and clinical studies, translating them into concise, evidence-based insights. By consolidating findings across multiple sources, the organization enables clients to track emerging research, competitive activity, and market developments with clarity and confidence.

Country

Switzerland

Industry

Healthcare

Services Used

Business situation

Researchers at the life sciences firm relied on multiple scientific databases, such as PubMed, PMC, ScienceDirect, MDPI, bioRxiv, and arXiv, to compile research updates and newsletters for pharmaceutical clients. Each reporting cycle required searching across platforms separately, reviewing overlapping results, validating article relevance, and formatting findings into structured grids and periodic newsletters.

Because the same publication often appeared across multiple sources, researchers had to manually identify and remove duplicates. Queries sometimes returned thousands, or even millions, of results, requiring further refinement before meaningful analysis could begin. In addition, database-specific query limitations occasionally required researchers to break down complex searches into smaller components.

For certain workflows, this process consumed 20–30 hours per reporting cycle, particularly when preparing 15- to 30-day research briefs and structured newsletters.

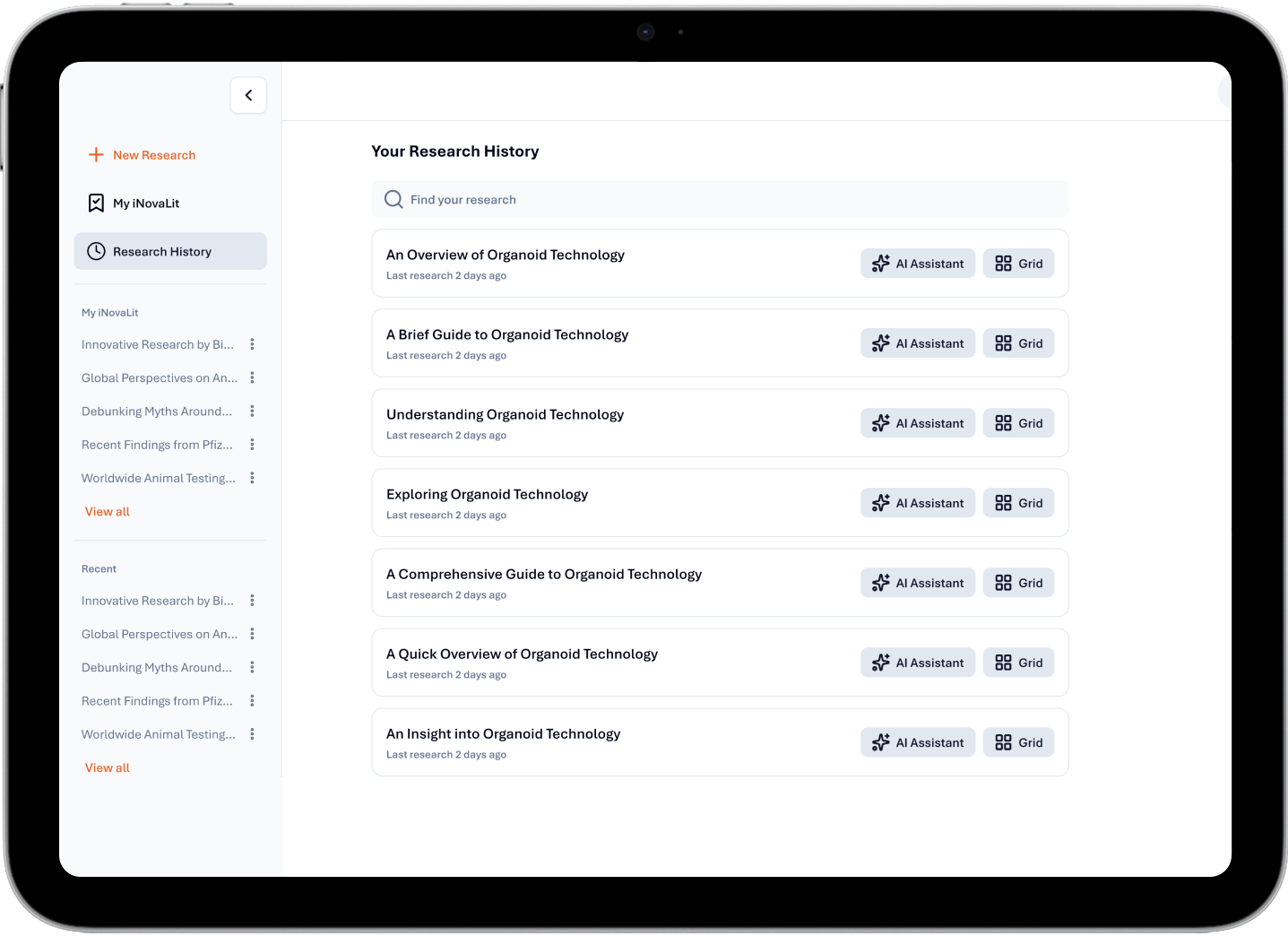

To address these challenges, the organization envisioned an AI-powered research assistant that could replicate its established workflow while automating repetitive tasks. The goal was not full automation, but a centralized environment where literature aggregation, validation, and report generation could occur within a single interface, while researchers retained full control over review and final outputs.

To bring this vision to life, the firm partnered with Daffodil Software to build a tailored AI-assisted research solution.

The following capabilities were defined during discovery:

Build a unified platform that aggregates references from multiple scientific databases, eliminating the need to run separate searches across fragmented systems.

Enable both natural-language queries and advanced structured queries within a single interface to support different researcher workflows.

Implement intelligent deduplication using identifiers such as DOI and publication metadata to detect overlapping entries across databases.

Validate and score articles for relevance before summary generation, while allowing researchers to adjust selections manually.

Automate repetitive tasks, including filtering, categorization into themes, and the generation of concise research summaries.

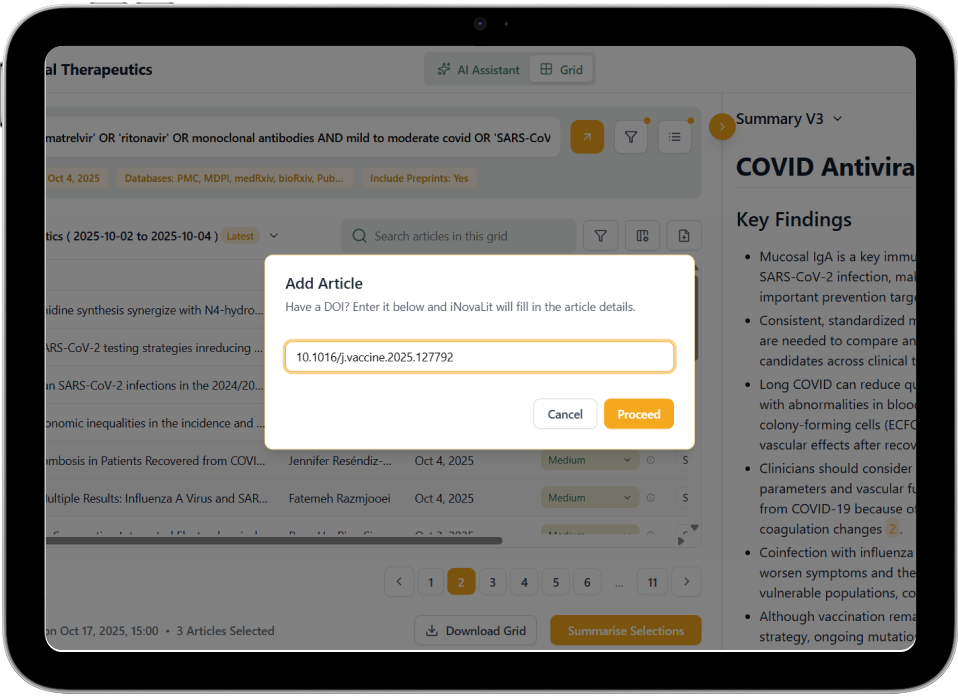

Provide researcher controls to toggle relevance, update metadata, categorize article status (new, updated, preprint, published), and manually add missed articles via DOI lookup.

Manage queries that return very large result sets by prompting researchers to refine them or by applying AI-assisted query optimization, improving relevance and controlling processing costs.

Support structured output generation, including downloadable PDFs, reference lists, and customizable newsletter-style deliverables.

Track article status changes within reporting windows, such as preprints transitioning to published papers, and reflect updates in subsequent reports.

Design the platform as an AI-assisted research environment that enhances efficiency while preserving human oversight at every step.

The Solution

Daffodil designed and built a tailored AI-assisted research platform optimized for clinical and pharmaceutical research workflows. Python and FastAPI powered the backend services, Next.js delivered the interactive research interface and grid-based review workflows, MongoDB managed structured article metadata, and AWS provided scalable infrastructure.

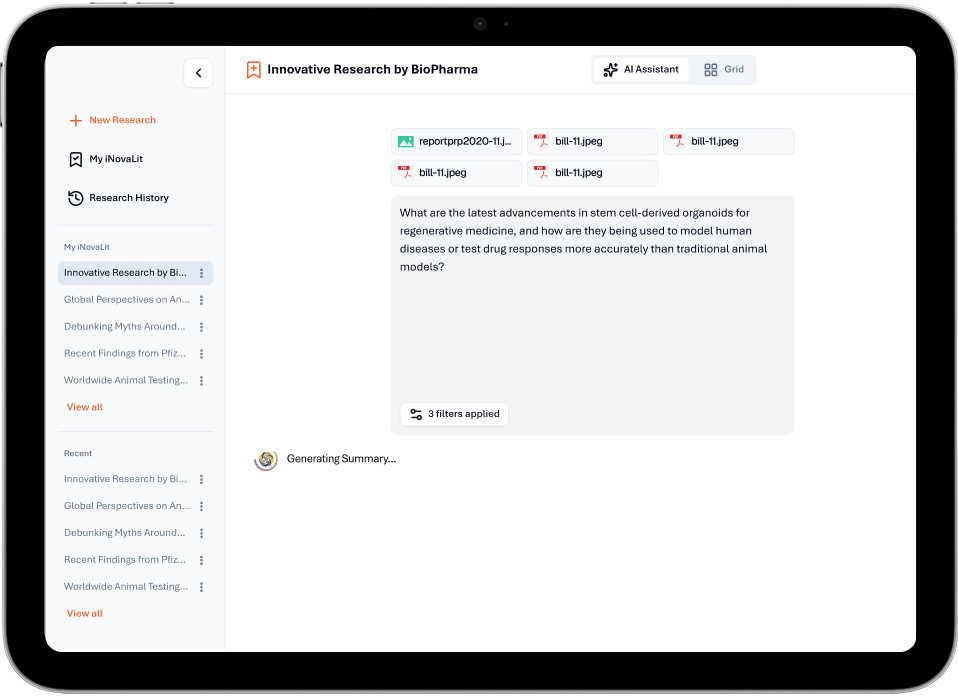

LangChain and OpenAI services supported LLM-assisted relevance scoring and summarization, while Playwright handled controlled browser automation.

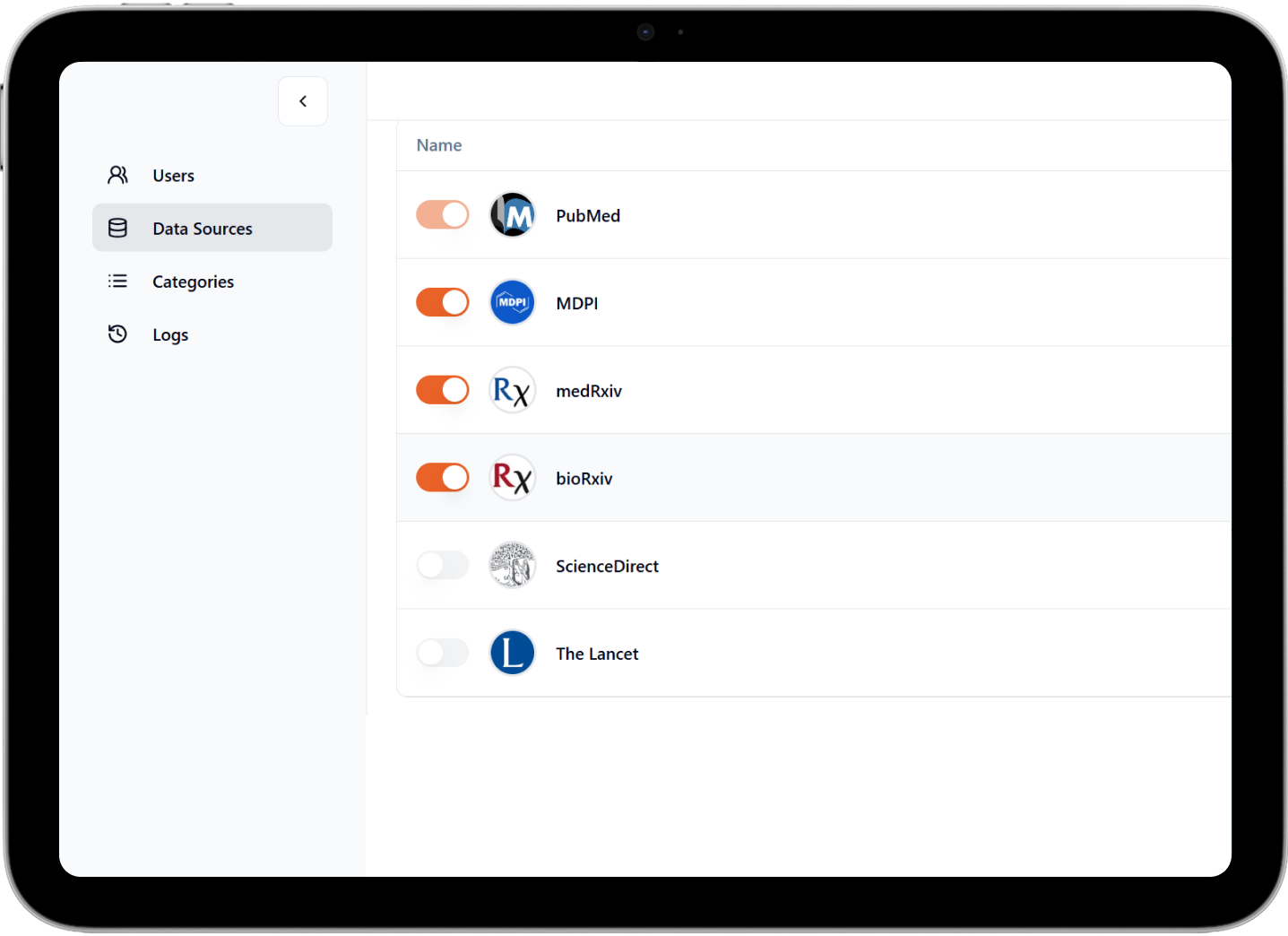

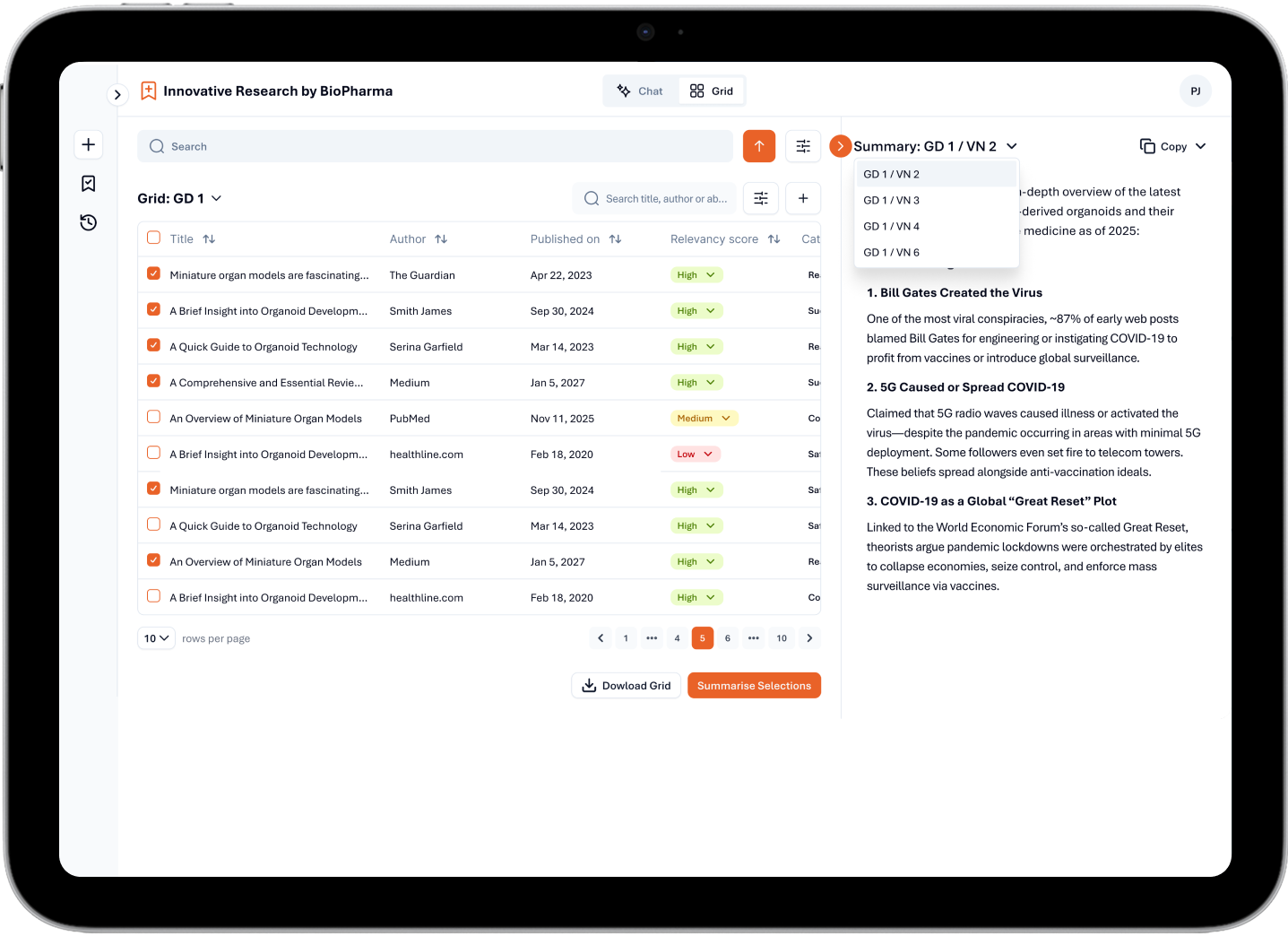

The platform aggregates references from leading scientific databases, including PubMed, PMC, ScienceDirect, MDPI, bioRxiv, arXiv, and MetaArchive, and consolidates them into a single interface. By centralizing retrieval and normalization, it eliminates the need to repeat identical searches across multiple sources and manually reconcile overlapping records.

The solution was designed around a Human-in-the-Loop model to balance automation with researcher oversight. The system streamlines repetitive steps such as identifying duplicate entries using DOI and publication metadata, validating results against search intent, assigning relevancy scores, categorizing articles into themes, and generating structured summaries from selected records.

The platform was designed with these core features:

Scientific references are aggregated across databases, including PubMed, PMC, ScienceDirect, MDPI, bioRxiv, arXiv, and MetaArchive, through a unified ingestion layer. Metadata is normalized into a consistent structure and linked back to source records, enabling consolidated retrieval without hosting full-text articles.

Records are matched and merged across sources using the DOI alongside title similarity and author/date metadata, so duplicates are removed before review. Each article is then scored as High, Medium, or Low relevance using LLM-assisted alignment of its abstract with the query, which surfaces the most pertinent studies for analyst attention while minimizing noise.
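The matching rule above can be sketched in Python. This is a minimal illustration, not the platform's implementation: the `Record` fields, the 0.92 title-similarity threshold, and the rule that two differing DOIs always mean two different works are assumptions made for the example. The standard library's `difflib.SequenceMatcher` stands in for whatever title-similarity measure the team actually chose.

```python
import re
from dataclasses import dataclass
from difflib import SequenceMatcher
from typing import Optional


@dataclass
class Record:
    source: str                # e.g. "PubMed", "bioRxiv"
    title: str
    first_author: str          # family name of the first author
    year: Optional[int]
    doi: Optional[str] = None


def normalize_doi(doi: Optional[str]) -> Optional[str]:
    """Lower-case a DOI and strip common URL or 'doi:' prefixes."""
    if not doi:
        return None
    doi = doi.strip().lower()
    doi = re.sub(r"^(https?://(dx\.)?doi\.org/|doi:)", "", doi)
    return doi or None


def normalize_title(title: str) -> str:
    """Lower-case and collapse punctuation/whitespace for fuzzy comparison."""
    return " ".join(re.sub(r"[^a-z0-9]+", " ", title.lower()).split())


def is_duplicate(a: Record, b: Record, title_threshold: float = 0.92) -> bool:
    """Assumed rule: DOIs decide when both exist; otherwise title + author + year."""
    doi_a, doi_b = normalize_doi(a.doi), normalize_doi(b.doi)
    if doi_a and doi_b:
        return doi_a == doi_b
    same_author = a.first_author.lower() == b.first_author.lower()
    same_year = a.year == b.year
    ratio = SequenceMatcher(None, normalize_title(a.title), normalize_title(b.title)).ratio()
    return same_author and same_year and ratio >= title_threshold


def deduplicate(records: list[Record]) -> list[Record]:
    """Keep the first occurrence of each work, in input order."""
    kept: list[Record] = []
    for rec in records:
        if not any(is_duplicate(rec, k) for k in kept):
            kept.append(rec)
    return kept
```

Treating two different DOIs as distinct works keeps a bioRxiv preprint and its later journal version as separate records, leaving the preprint-to-publication link to the status-tracking step. The pairwise loop is quadratic, so a real ingestion layer would likely index by normalized DOI first.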

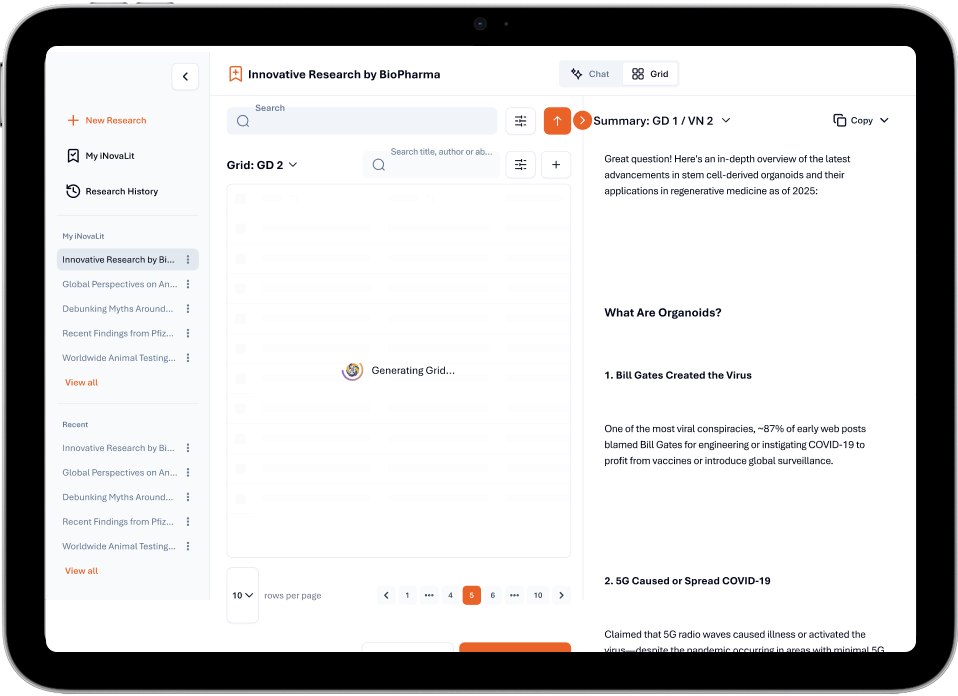

Grid View provides a spreadsheet-style layout with raw article data, metadata, filters, relevance tags, and on-demand extraction of objectives and conclusions for precise manual validation.
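One way to picture relevance tags combined with manual validation is a grid row that carries both the model's score and an optional researcher override. In this sketch the numeric score in [0, 1], the cut-offs, and the `GridRow` and `rows_for_summary` names are hypothetical; the case study only says that articles are tagged High, Medium, or Low and that researchers can toggle relevance.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical cut-offs; the case study does not say how scores map to tiers.
HIGH_CUTOFF = 0.75
MEDIUM_CUTOFF = 0.40
TIER_ORDER = {"High": 0, "Medium": 1, "Low": 2}


def score_to_tier(score: float) -> str:
    """Map an assumed model relevance score in [0, 1] to a High/Medium/Low tag."""
    if not 0.0 <= score <= 1.0:
        raise ValueError(f"score out of range: {score}")
    if score >= HIGH_CUTOFF:
        return "High"
    if score >= MEDIUM_CUTOFF:
        return "Medium"
    return "Low"


@dataclass
class GridRow:
    doi: str
    model_score: float
    override: Optional[str] = None   # set when a researcher changes the tag
    included: bool = True            # researcher can drop a row from the summary

    @property
    def tier(self) -> str:
        # A researcher's manual tag always wins over the model's suggestion.
        return self.override or score_to_tier(self.model_score)


def rows_for_summary(rows: list[GridRow]) -> list[GridRow]:
    """Keep included rows, ordered High, then Medium, then Low (stable within a tier)."""
    return sorted((r for r in rows if r.included), key=lambda r: TIER_ORDER[r.tier])
```

Storing the override separately from the model score keeps the original suggestion visible, so a reviewer can see where human judgment departed from the model.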

AI-Assisted View generates concise summaries by default using LLMs, surfacing study objectives, findings, and conclusions to speed comparative analysis without replacing expert judgment.

Articles are automatically classified into research themes to organize literature by topic and streamline downstream reporting. Pre-published records are tracked for status changes and revisions; when updates occur, the system flags changes and routes them to the appropriate section in recurring outputs (such as newsletters), preventing redundancy.
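The change tracking described above amounts to comparing each reporting window against the previous one. The sketch assumes each record carries a revision counter and that a published paper can be linked to its earlier preprint through a `preprint_doi` field; both fields are hypothetical, as is the `classify_changes` function. The labels reuse the statuses listed in the requirements (new, updated, preprint, published).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Snapshot:
    doi: str
    is_preprint: bool
    version: int                        # hypothetical revision counter from the source
    preprint_doi: Optional[str] = None  # hypothetical link from a paper to its preprint


def classify_changes(previous: dict[str, Snapshot], current: list[Snapshot]) -> dict[str, str]:
    """Label each current record relative to the previous reporting window."""
    labels: dict[str, str] = {}
    for rec in current:
        if rec.preprint_doi in previous and not rec.is_preprint:
            # A preprint reported earlier has now appeared as a published paper.
            labels[rec.doi] = "published"
        elif rec.doi not in previous:
            labels[rec.doi] = "preprint" if rec.is_preprint else "new"
        elif rec.version > previous[rec.doi].version:
            # Known record revised since the last report.
            labels[rec.doi] = "updated"
    # Unchanged records get no label, so they are not repeated in the next report.
    return labels
```

Leaving unchanged records unlabeled is one way to keep recurring newsletters from repeating items while still routing genuine updates to their own section.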

Search requests are processed through a single interface that accepts both natural-language prompts and structured PubMed-style syntax. Query filters and refinement prompts are applied before execution, enabling flexible retrieval while maintaining precision across diverse researcher workflows.
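A single search box that accepts both prompt styles needs some way to tell them apart and to stop very large result sets before they reach LLM processing. The heuristic below is an assumption for illustration: it treats bracketed field tags (such as `[tiab]` or `[Title/Abstract]`) and upper-case `AND`/`OR`/`NOT` as signs of PubMed-style syntax, and the 5,000-hit limit is a made-up value. The hit estimate could come from a count-only request, such as PubMed's ESearch endpoint, which reports a total count for a query.

```python
import re

FIELD_TAG = re.compile(r"\[[A-Za-z][A-Za-z /:]*\]")  # e.g. [tiab], [mh], [Title/Abstract]
BOOLEAN_OP = re.compile(r"\b(AND|OR|NOT)\b")        # PubMed-style upper-case operators

MAX_RESULTS = 5000  # hypothetical cut-off before asking the researcher to refine


def looks_structured(query: str) -> bool:
    """Heuristic: PubMed-style if the query uses field tags or boolean operators."""
    return bool(FIELD_TAG.search(query) or BOOLEAN_OP.search(query))


def plan_query(query: str, estimated_hits: int) -> dict:
    """Decide how to handle a query before any articles are retrieved or scored."""
    # Natural-language queries would presumably be translated by an LLM first.
    mode = "structured" if looks_structured(query) else "natural_language"
    # Large result sets are held back so the researcher can narrow them.
    action = "ask_to_refine" if estimated_hits > MAX_RESULTS else "execute"
    return {"mode": mode, "action": action}
```

Checking the count before retrieval means refinement prompts appear while they are still cheap, rather than after thousands of abstracts have been sent for scoring.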

Missing publications can be manually added using DOI references. Public metadata is retrieved and appended to the dataset, preserving traceability and enabling seamless inclusion of critical studies not captured during automated aggregation.
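The case study does not name the metadata service behind the DOI lookup. As an assumption, the sketch below uses the public Crossref REST API (`https://api.crossref.org/works/{doi}`), whose responses wrap the record in a `message` object with fields such as `title`, `container-title`, `author`, and `issued`. The `to_grid_record` mapping and its output field names are hypothetical.

```python
import json
import urllib.parse
import urllib.request


def fetch_crossref(doi: str, timeout: float = 10.0) -> dict:
    """Fetch public metadata for a DOI from Crossref (an assumed source)."""
    url = "https://api.crossref.org/works/" + urllib.parse.quote(doi, safe="/")
    req = urllib.request.Request(url, headers={"User-Agent": "research-platform-sketch/0.1"})
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)["message"]


def to_grid_record(message: dict) -> dict:
    """Map a Crossref 'message' object to fields a review grid might need."""
    authors = [
        " ".join(part for part in (a.get("given"), a.get("family")) if part)
        for a in message.get("author", [])
    ]
    date_parts = message.get("issued", {}).get("date-parts", [[None]])
    return {
        "doi": message.get("DOI", "").lower(),
        "title": (message.get("title") or [""])[0],
        "journal": (message.get("container-title") or [""])[0],
        "authors": authors,
        "year": date_parts[0][0] if date_parts and date_parts[0] else None,
        "source": "manual DOI lookup",  # keeps provenance visible in the grid
    }
```

Keeping the network call and the mapping separate lets the mapping be tested without network access; in use, a record would be built with `to_grid_record(fetch_crossref(doi))`.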

Validated article selections are processed through AI-assisted summarization to generate concise, reference-linked briefs. Outputs retain structured formatting and source attribution, while allowing analysts to adjust article selections before finalizing summaries.
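Reference-linked briefs can be approached by numbering the selected articles, asking the model to cite those numbers, and checking its output afterwards. This sketch covers only the prompt and the check; the actual LLM call (the case study mentions LangChain and OpenAI) is left out, and `Selected`, `build_brief_inputs`, and `unknown_citations` are hypothetical names.

```python
import re
from dataclasses import dataclass


@dataclass
class Selected:
    doi: str
    title: str
    abstract: str


def build_brief_inputs(articles: list[Selected], topic: str) -> tuple[str, list[str]]:
    """Return (prompt, reference_list) so each statement can cite a numbered source."""
    references = [
        f"[{i}] {a.title}. https://doi.org/{a.doi}" for i, a in enumerate(articles, start=1)
    ]
    context = "\n\n".join(
        f"[{i}] {a.title}\n{a.abstract}" for i, a in enumerate(articles, start=1)
    )
    prompt = (
        f"Summarize recent findings on {topic} using only the sources below.\n"
        "Cite each statement with its bracketed source number.\n\n"
        f"{context}"
    )
    return prompt, references


def unknown_citations(summary: str, n_refs: int) -> set[int]:
    """Citation numbers in a generated summary that match no selected article."""
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", summary)}
    return {n for n in cited if not 1 <= n <= n_refs}
```

Flagging citation numbers that match no selected article gives the analyst a quick signal that a summary needs review before it is finalized.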

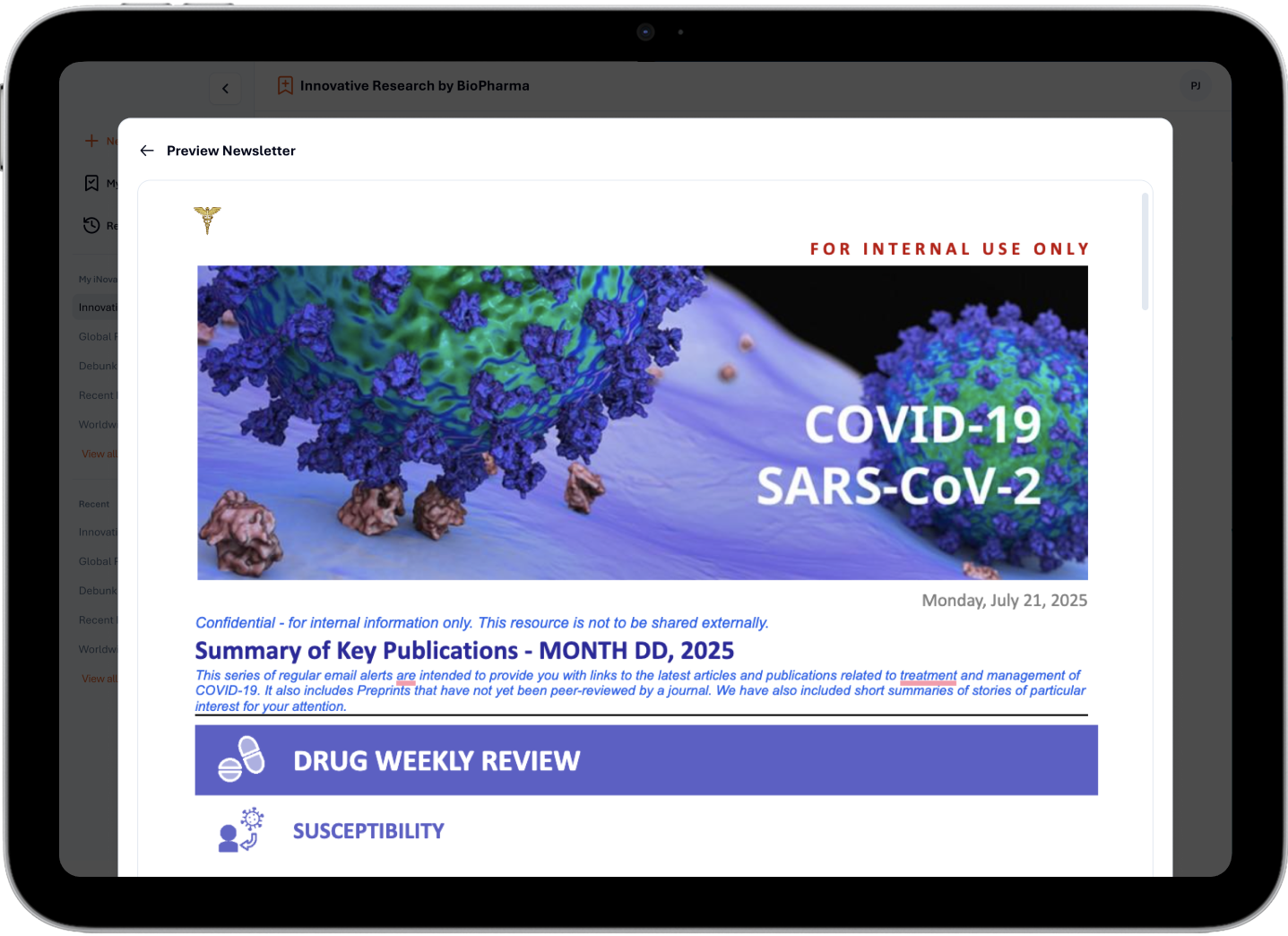

Reviewed results can be exported as consolidated grids, structured reports, or newsletters. Exports preserve citations, relevance markers, categories, and update flags, enabling consistent, client-ready deliverables without additional formatting work.
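For the grid export, a minimal sketch with Python's `csv` module shows how citations, relevance markers, categories, and update flags can travel together in one file. The column names and the `export_grid_csv` function are assumptions; the case study does not describe the export format beyond the fields it preserves.

```python
import csv
import io

# Hypothetical column layout for an exported review grid.
EXPORT_COLUMNS = ["doi", "title", "relevance", "category", "status", "update_flag", "citation"]


def export_grid_csv(rows: list[dict]) -> str:
    """Serialize reviewed grid rows to CSV text, adding a DOI link as the citation."""
    buf = io.StringIO()
    # extrasaction="ignore" drops internal fields; missing columns are written as "".
    writer = csv.DictWriter(buf, fieldnames=EXPORT_COLUMNS, extrasaction="ignore")
    writer.writeheader()
    for row in rows:
        out = dict(row)
        out["citation"] = f"https://doi.org/{row['doi']}"
        out["update_flag"] = "yes" if row.get("update_flag") else ""
        writer.writerow(out)
    return buf.getvalue()
```

Letting the `csv` module handle quoting keeps titles containing commas intact, and ignoring extra keys prevents internal working fields from leaking into client deliverables.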

The Impact

The AI-assisted platform streamlined the client’s literature review and reporting workflows by automating repetitive steps such as aggregation, deduplication, validation, and summary preparation. For certain workflows, effort dropped from 20–30 hours to 4–8 hours per reporting cycle, and overall productivity improved by approximately 50%.
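Taking the quoted ranges at face value, the time saving $r$ for these specific workflows can be bounded by pairing the endpoints, where $t$ is hours per reporting cycle. This is only arithmetic on the stated figures and assumes the before and after ranges describe the same workflows.

```latex
r = 1 - \frac{t_{\text{after}}}{t_{\text{before}}}, \qquad
r_{\min} = 1 - \frac{8}{20} = 0.60, \qquad
r_{\max} = 1 - \frac{4}{30} \approx 0.87
```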

By consolidating references from multiple scientific databases into a single interface, the platform eliminated the need to repeat queries across fragmented sources while maintaining source traceability. Automated duplicate detection and relevance scoring reduced manual screening effort, allowing researchers to focus on interpretation rather than data cleanup.

Reporting cycles were simplified through structured weekly report generation, which supported recurring 15- to 30-day client deliverables. Outputs could be generated as reference-linked summaries, PDFs, or customizable newsletters with categorized articles and status indicators.

This balanced model of AI-assisted processing and researcher oversight improved efficiency while preserving analytical control and meeting the client’s reliability expectations.
